The Wrong Upskill

Everyone is upskilling. Almost no one is building judgment.

Everyone in partnerships is being told to learn AI.

Most of them are listening. Courses are being taken. Certificates are being earned. LinkedIn bios are being updated.

And most of it won’t matter.

Not because AI isn’t important. It is. But because the training being sold to partnership professionals is disconnected from the work they actually do. It’s information transfer dressed up as skill building.

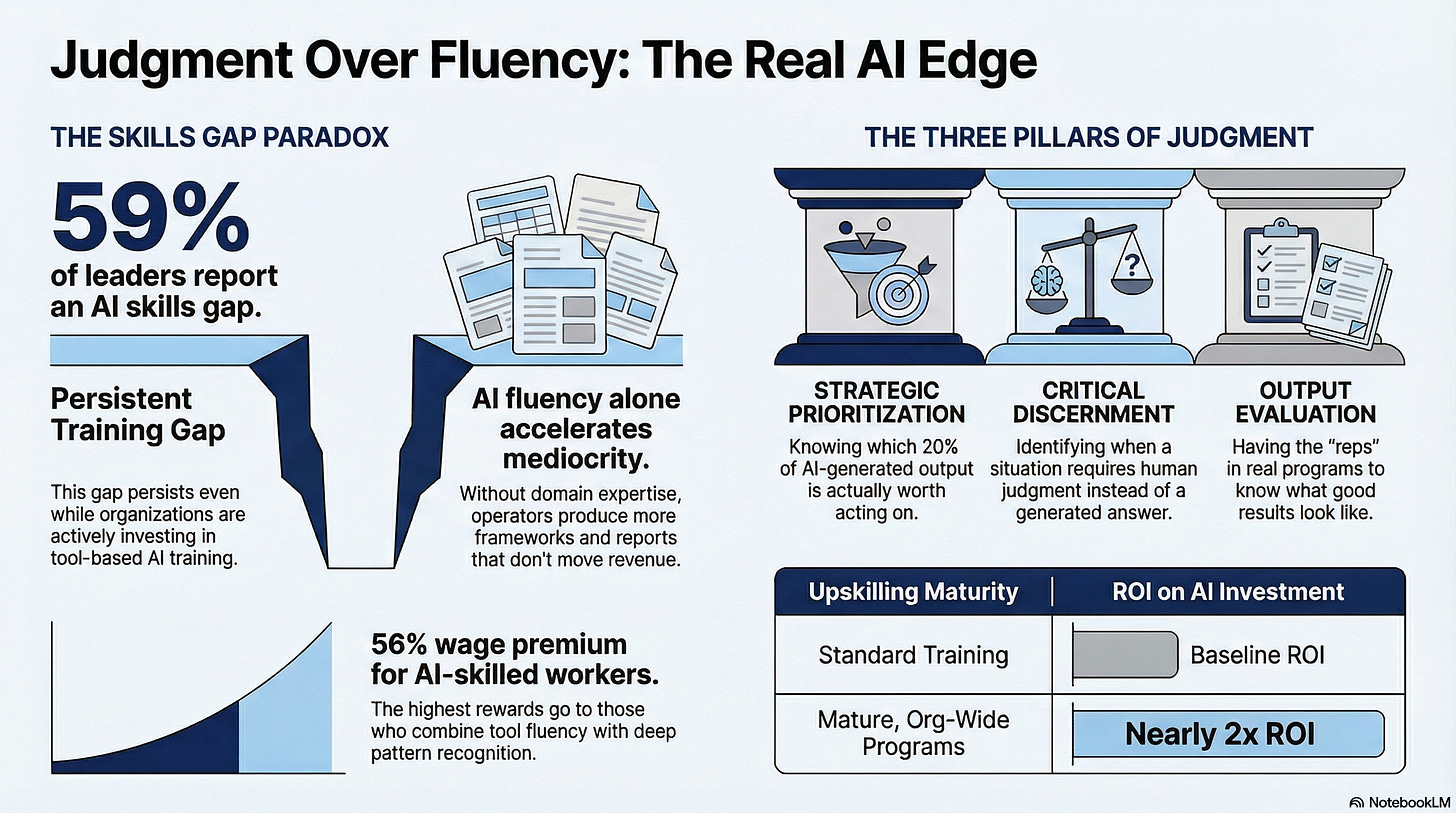

Here’s the evidence. 59% of enterprise leaders report an AI skills gap right now, even while actively investing in AI training. Only 35% have a mature, organization-wide upskilling program. And the ones that do? Nearly twice the ROI on their AI investments (DataCamp).

More courses are not closing the gap. Something else is.

AI adoption doesn’t have a fluency problem. It has a judgment problem.

You can hand the same tool to two partner operators and get completely different outputs. Not because one knows the tool better. Because one knows the domain.

AI will produce a co-sell strategy, a partner tier framework, an attribution model. It will do it fast and it will sound confident. If you can’t evaluate the output, you can’t use it. And evaluating it requires something no course teaches you: years of knowing what good looks like in this specific function.

That’s domain expertise. It’s the foundation. Without it, AI just accelerates mediocrity.

The second thing is prioritization.

AI expands what’s possible faster than most operators can absorb. The problem isn’t generating output. It’s knowing which 20% of that output is worth acting on.

A partner manager who hasn’t run a real program doesn’t know which metrics actually predict revenue. They don’t know which partner tier structures fail in practice. They don’t know which co-sell plays die in the field.

So they use AI to produce more of everything. More decks. More frameworks. More reports. None of it moves the number.

Prioritization is a judgment call. It requires context your AI doesn’t have and can’t be given through a prompt.

The third thing is discernment: knowing when not to use AI.

This is the one nobody talks about.

Gartner predicts that by 2026, 50% of organizations will require AI-free skills assessments (Gloat), specifically because critical thinking is atrophying from overreliance on generative tools. The muscle weakens if you stop using it.

The operators who stay dangerous are the ones who use AI as leverage, not as a substitute for thinking. They know when a situation requires their judgment and not a generated answer. They catch what AI gets wrong because they’ve seen enough to know when something doesn’t smell right.

That discernment is not a feature of the tool. It’s a feature of the operator.

Here’s what this means for your career.

The number of workers in roles where AI fluency is explicitly required grew sevenfold in two years. Workers with AI skills command a 56% wage premium over peers in the same role (Gloat).

That premium is real. But it is not going to the person who finished the most courses.

It’s going to the operator with ten years of pattern recognition who now does the same work in half the time. It’s going to the person who knows a bad partner strategy when they see one, can cut a 40-slide deck down to the six slides that matter, and trusts their read on a situation even when the AI says otherwise.

The upskill that matters right now is not a new one.

It’s investing in your own judgment. Getting reps in real programs. Building the domain depth that makes AI useful instead of dangerous in your hands.

Learn the tools. Everyone will.

The ones who win are the ones who bring something to the tools that can’t be downloaded.